How the American AI Industry Collapses

My contrarian bear case

Introduction

Predictions about the future of AI tend to fall into two extreme categories: either AI will turn superintelligent (e.g. AI 2027) or it’s a financial bubble waiting to burst (e.g. Ed Zitron). A more measured third view, proposed by Arvind Narayanan and Sayash Kapoor, is that ‘AI is a normal technology’: AI will neither turn into Skynet nor turn out to be essentially useless.

There is also a fourth option that I didn’t consider before I read a recent post by Kevin Kelly. What if the current uncertainty and confusion we are experiencing about AI’s economic impact and future capabilities persists?

Kelly describes the scenario:

“AI continues to surprise us at its core. As AI continues to evolve rapidly there will be no resolution to these questions in 3 years. By 2029, we still won’t know if AGI is possible, we can’t tell if employment is disrupted, and we still can’t say if it is worth the huge investment. I don’t mean AI progress stalls. I mean, AI continues to advance, but the new stuff doesn’t answer the old questions, it only expands our ignorance because the new is new in a new way. We have to alter our ideas (and measurements) of employment, we have to amend our concepts (and measurements) of the economy, and we have to shift our ideas of what AI even is.”

The ‘sustained uncertainty’ scenario may be the worst outcome of all. We can handle success and we can handle failure, but not knowing what we know and don’t know is a frightening state to be in, and the economy has a low tolerance for uncertainty and doubt. The whole data-driven economy rests on the assumption that we can accurately predict future outcomes and behaviors given enough data.

Kelly calls it “The Age of Ambiguity”. He emphasizes that it’s not a prediction, but a possibility we should consider and prepare for. I completely agree. At the same time, I want to ask: what is the source of all this ambiguity and confusion? Could it be that the ugly truth is staring right at us, while we are distracted by the mechanical applause for tech leaders and American entrepreneurship on social media?

It sure seems possible. In my view, there is a not insignificant chance that the American AI industry could collapse. Whether it happens this year, in three years, or later is impossible to predict. Traditional media overemphasizes misleading statements by tech CEOs and corporate marketing stunts, while American algorithms amplify well-presented hype to increase profits and engagement. Most of all, the technological and financial elites of America have joined forces in a bet that says artificial intelligence is real, or soon will be. It’s the most expensive bet in the history of capitalism, and being wrong is not an option. Even so, it’s worth considering whether they are betting on the impossible.

Below, I will lay out my arguments in simple terms. Your job is to tell me where the analysis is wrong.

‘American AI’

One defining trait of ‘American AI’ is that no one is allowed to see how the models work under the hood. Officially, that is in the consumers’ best interest. Open-source AI could be misused by terrorists to create biological weapons such as incurable diseases, or to launch advanced cyberattacks by exploiting critical software bugs at scale. Additionally, as American AI inches closer to ‘superintelligence’ or ‘AGI’, there’s a chance that ordinary users and businesses will be denied access to new models.

The secrecy surrounding American AI is not just a competitive moat for the companies. It creates an aura of mystique feeding the narrative that American AI labs are building something brand new and exceptional. In truth, American AI needs to be exceptional and deliver exceptional returns.

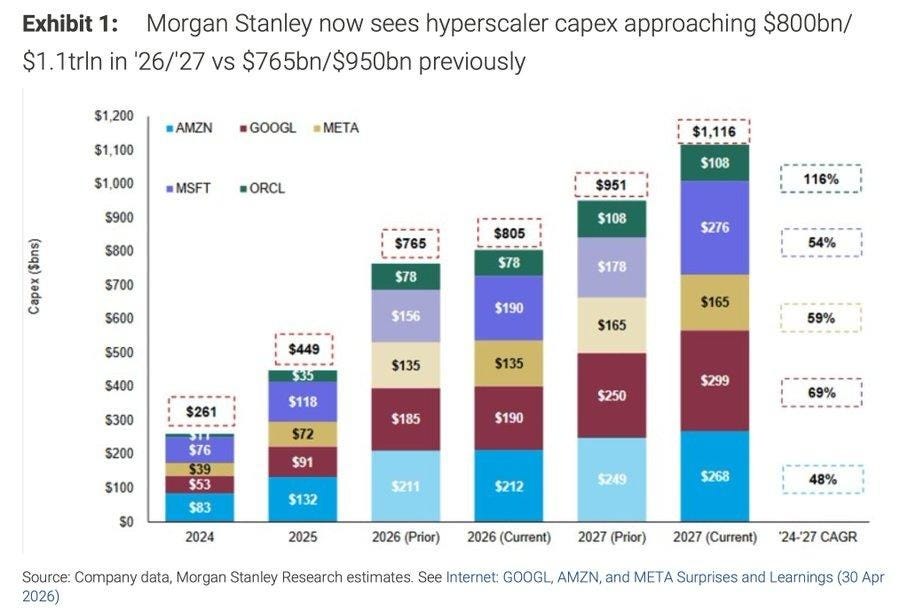

The American cloud giants Amazon, Alphabet, Meta, Microsoft, and Oracle are projected to spend more than $1.1 trillion by 2027 to meet the demands of AI infrastructure. These investments, alongside the inflated value of AI-related stocks, are central to sustaining the rampant growth of the S&P 500 and the Dow Jones (and presumably what’s left of Trump’s popularity with business leaders).

The reverse effect is how the ‘AI bubble’ pops: without sufficient returns on AI investments, the hyperscalers are forced to wind down capital expenditures, the stock market declines, and the impact echoes throughout the economy and the political theater.

Luckily, the rise of Claude Code suggests that there is demand for AI that could justify the astronomical infrastructure investments. Anthropic’s run-rate revenue (monthly revenue projected over a full year) reportedly surpassed $30 billion in early April, up from just $9 billion at the end of 2025 and $14 billion in February 2026. After the stunning subscription growth, Anthropic is now valued at $1 trillion pre-IPO on a secondary private market, surpassing OpenAI.
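To make the run-rate figures concrete, here is a rough sketch of the arithmetic. It assumes the common convention that run rate equals one month of revenue multiplied by 12; the function names are my own illustration, and the only inputs are the reported figures quoted above.

```python
# Sketch only: assumes run rate = one month's revenue x 12.
# Function names are hypothetical; inputs are the reported figures (in $bn).

def annualized_run_rate(monthly_revenue_bn: float) -> float:
    """Project a single month's revenue over a full year."""
    return monthly_revenue_bn * 12


def implied_monthly_revenue(run_rate_bn: float) -> float:
    """Back out the monthly revenue implied by an annualized run rate."""
    return run_rate_bn / 12


reported_run_rates_bn = {
    "end of 2025": 9.0,
    "February 2026": 14.0,
    "early April 2026": 30.0,
}

for label, run_rate in reported_run_rates_bn.items():
    monthly = implied_monthly_revenue(run_rate)
    print(f"{label}: ${run_rate:.0f}bn run rate implies about ${monthly:.2f}bn per month")

# How many times larger the April run rate is than the end-of-2025 one.
growth_multiple = (
    reported_run_rates_bn["early April 2026"] / reported_run_rates_bn["end of 2025"]
)
print(f"Growth multiple since end of 2025: {growth_multiple:.2f}x")
```

The point of the sketch is that a run rate is an extrapolation from a short window, not revenue actually collected over twelve months, so one strong month moves the headline number a lot.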

But how much of Anthropic’s revenue is real and who is really paying for it?

Anthropic receives billion-dollar investments from Google and Amazon, but much of that is paid in the form of cloud credits. This means you pay for a premium subscription to Claude, while Anthropic pays Google Cloud and AWS to host your Projects and conversations. Anthropic announces its revenue, but is not obliged to disclose the costs of running your queries, the models, or hosting your data. Nonetheless, the growing demand justifies further billion-dollar investments that never leave the balance sheets of Google and Amazon. The two companies own equity stakes in Anthropic too. In its earnings release from last week, Google’s parent company, Alphabet, disclosed that nearly half of its record-breaking $62.6 billion profit came from the equity it owns in private companies, which is primarily Anthropic. Amazon’s concurrent earnings release directly states that first-quarter net income “includes pre-tax gains of $16.8 billion included in non-operating income from our investments in Anthropic”.

This kind of circular financing between AI startups and cloud providers was documented in a Federal Trade Commission report from January 2025. The arrangements blur how much net profit the AI startups are actually generating and help to attract more investments, which in turn prop up the value of the cloud providers’ equity stakes.

As the AI startups’ revenue increases, so do their computing costs, although we don’t know to what extent. Ed Zitron reported in September 2025 that Anthropic was spending more than $2.66 billion on computing costs to AWS alone on an estimated $2.55 billion in revenue. If we factor in the computing expenses Anthropic paid to Google during the same period, R&D costs, salaries to employees, etc., all evidence suggests that Anthropic was, and still is, operating at a major loss.
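A quick back-of-the-envelope check of those two figures, as a sketch. The inputs are only the numbers Zitron reported; since the AWS spend is given as “more than” $2.66 billion, the results are lower bounds, and the function names are hypothetical.

```python
# Sketch only: inputs are the two reported figures (in $bn); the AWS figure
# is a floor ("more than $2.66 billion"), so both results are lower bounds.

def cost_to_revenue_ratio(cost_bn: float, revenue_bn: float) -> float:
    """Dollars spent per dollar of revenue."""
    return cost_bn / revenue_bn


def shortfall_bn(cost_bn: float, revenue_bn: float) -> float:
    """Amount by which cost exceeds revenue (positive means a loss)."""
    return cost_bn - revenue_bn


aws_compute_cost_bn = 2.66   # reported AWS compute spend (lower bound)
estimated_revenue_bn = 2.55  # estimated revenue over the same period

ratio = cost_to_revenue_ratio(aws_compute_cost_bn, estimated_revenue_bn)
gap = shortfall_bn(aws_compute_cost_bn, estimated_revenue_bn)

print(f"AWS compute alone: at least ${ratio:.2f} spent per $1 of revenue")
print(f"Shortfall before any other costs: at least ${gap:.2f}bn")
```

On these numbers, AWS compute alone consumes a little over a dollar for every dollar of revenue, before Google compute, R&D, or salaries are counted.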

Whether the business model is sustainable in the long term and can lead to profitability in 2028, as Anthropic projects, remains anyone’s guess. The arrangement does appear shady, but as long as Anthropic continues to lock in users by building dependency around its products and gradually increases subscription prices, the sky is not even the limit, since data centers can be built in space. However, the aggressive race towards exceptionalism and the current equilibrium between the financial and technological elites and political decision-makers in the US could be disturbed and threatened by external factors. I can think of at least three such factors: two of which are harming the business model of American AI, and one which, combined with the others, is an existential threat.
